About the Project

Heat Death of the Metaverse is a real-time 3D motion capture music video developed in Unreal Engine 5 for QUT's KNB227 - CGI Technologies course. It explores themes of digital existence and technological entropy in a cinematic, visually striking style.

The project utilises real-time rendering and motion capture pipelines within UE5. As CG Artist, I was responsible for creating and refining the visual assets and 3D environment. As Project Manager, I coordinated the team's workflow, and as Editor, I assembled the final cut.

Working with motion capture data in real-time 3D was a distinctive challenge - cleaning, retargeting, and integrating mocap data into a cohesive cinematic piece required both technical precision and creative sensitivity.

A summary of the development process is detailed below. You can read more about the full process on the blog site for this project.

My Process

Research, Mood Boards & Concept Art

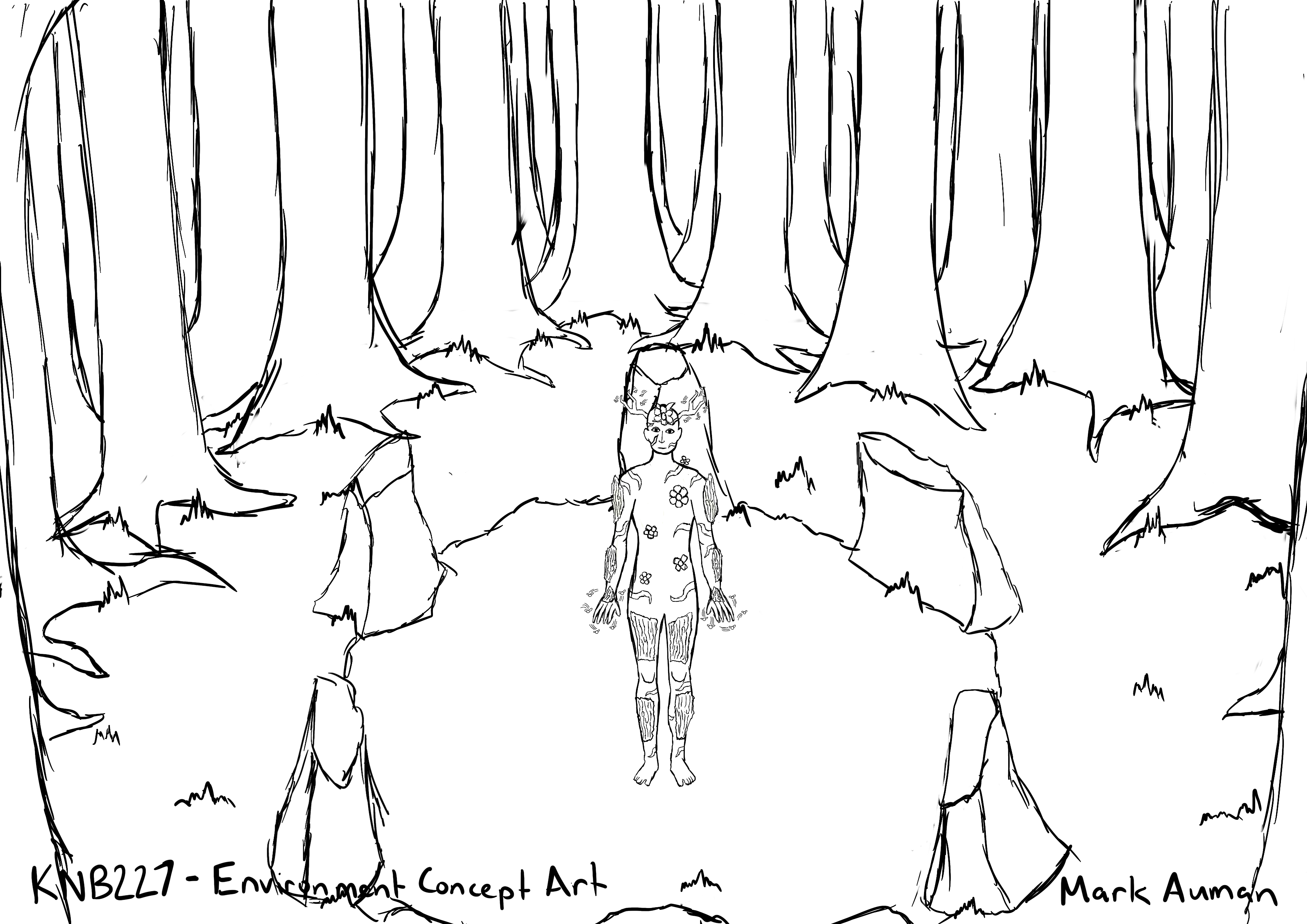

KNB227 ran in two phases. For the individual phase, each student had to conceptualise and develop their own Metahuman character and a performance environment to go with it. I was immediately drawn to a woods aesthetic - Unreal Engine has an extensive library of forest and woodland assets, which meant I could produce a high-quality environment without having to build every element from scratch.

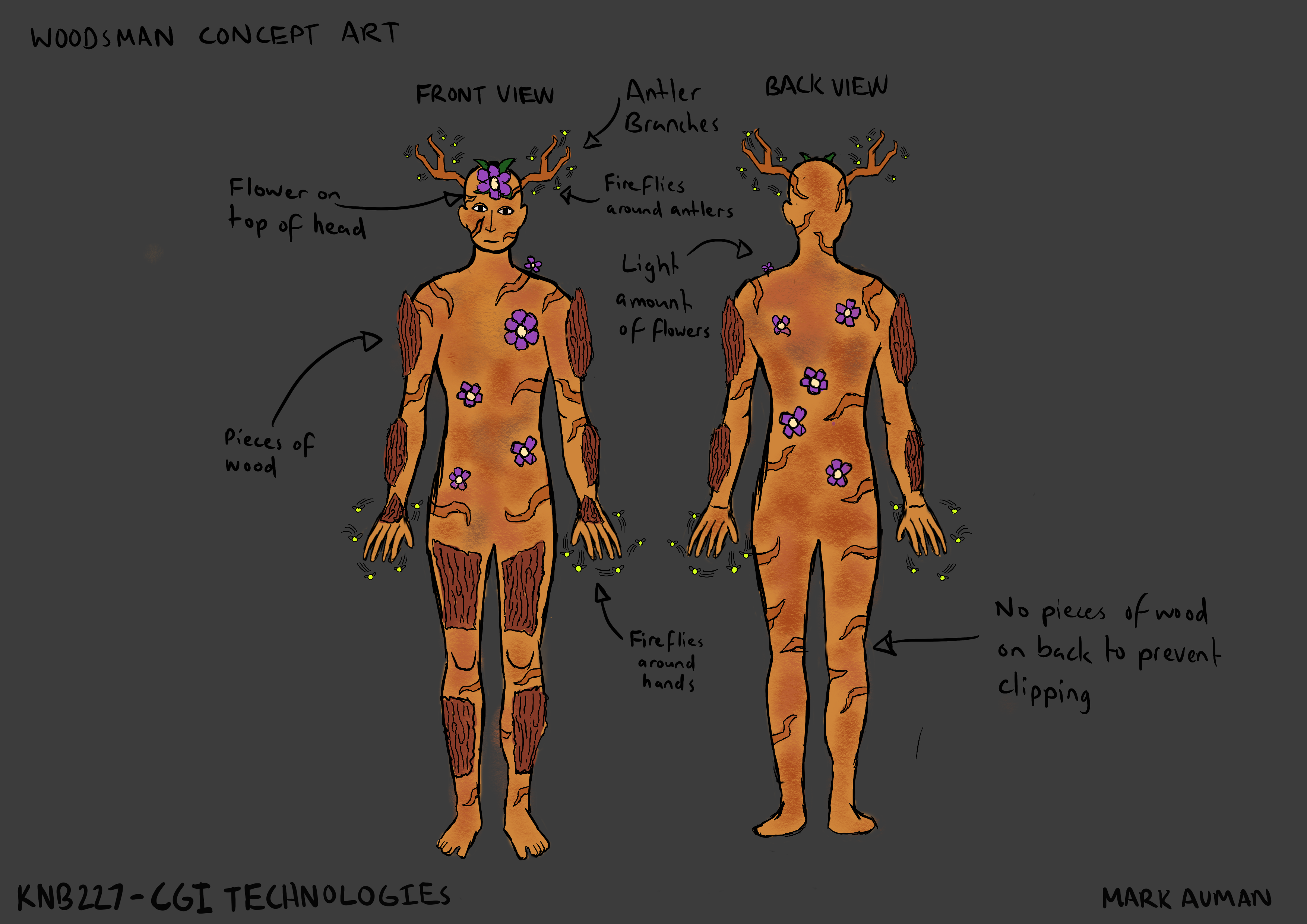

I created mood boards covering the overall visual direction, and produced concept art for the character - working through thumbnail sketches before developing coloured character designs. These established the look of The Woodsman: a mysterious, otherworldly figure suited to the woods setting. The concept work informed every subsequent asset decision in the individual phase.

Initial Metahuman & Environment Development

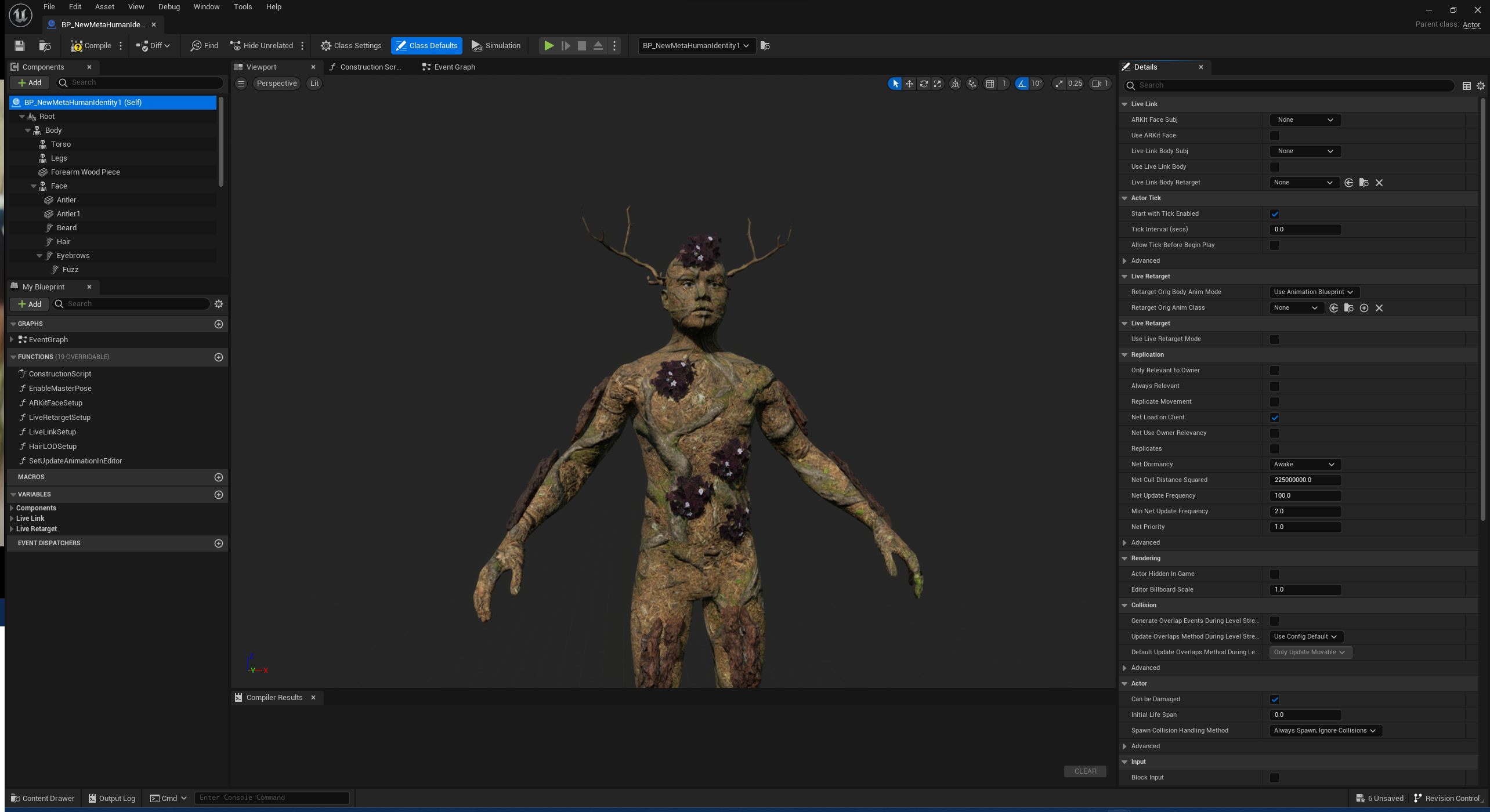

I created The Woodsman in Unreal Engine 5's Metahuman Creator system - a browser-based tool that generates highly detailed, photorealistic human characters. The level of fidelity achievable was striking, and the system itself was more accessible than I expected given the quality of the output.

The woods environment was built using Quixel Megascans assets - a library of photogrammetry-scanned real-world materials and objects that integrates directly into Unreal Engine. Drawing on experience from previous units, I assembled the environment to complement The Woodsman's visual identity and create a moody, atmospheric space suited to the planned cinematic performance.

Learning How to Clean Up & Apply Motion Capture Data

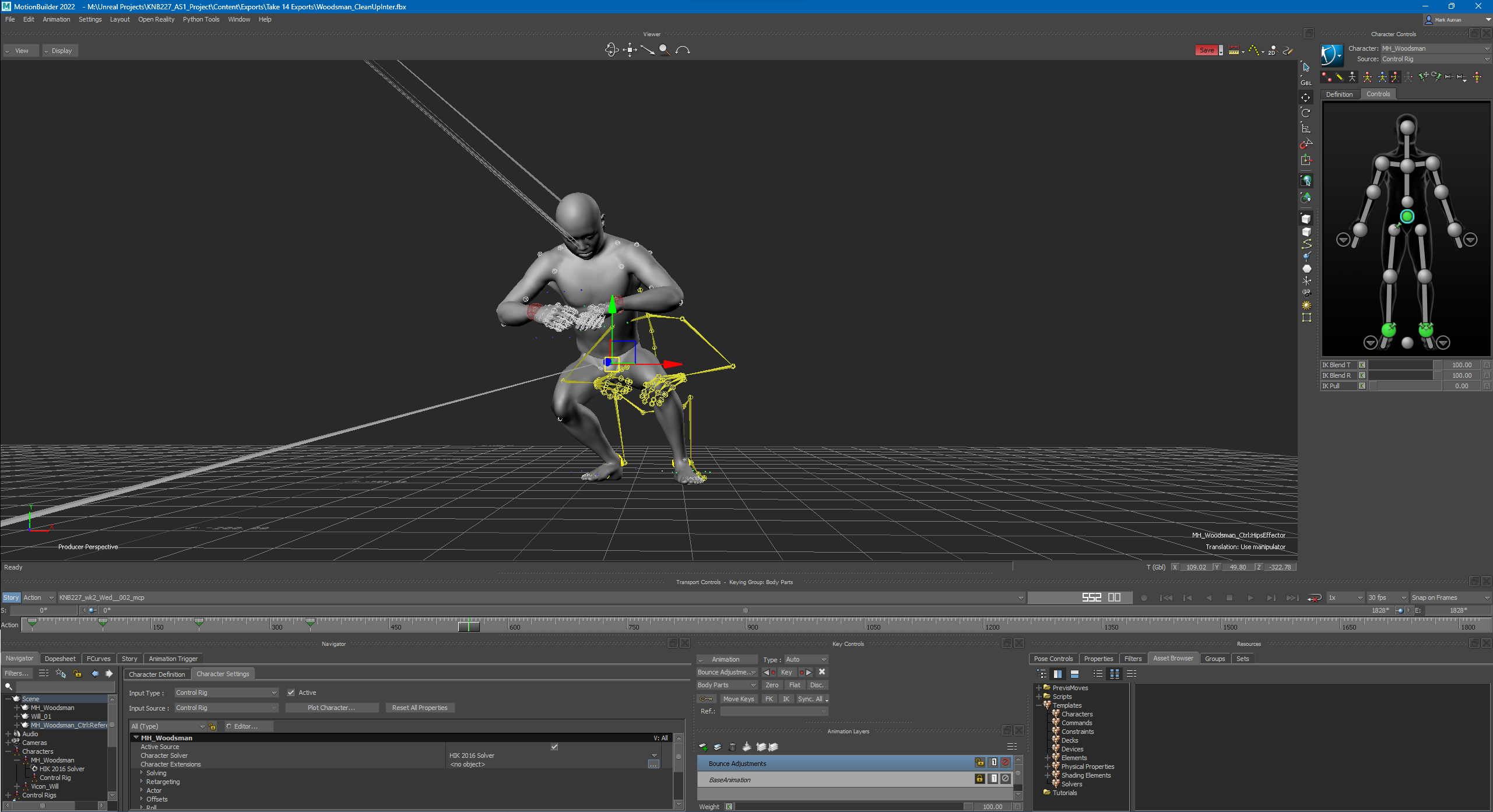

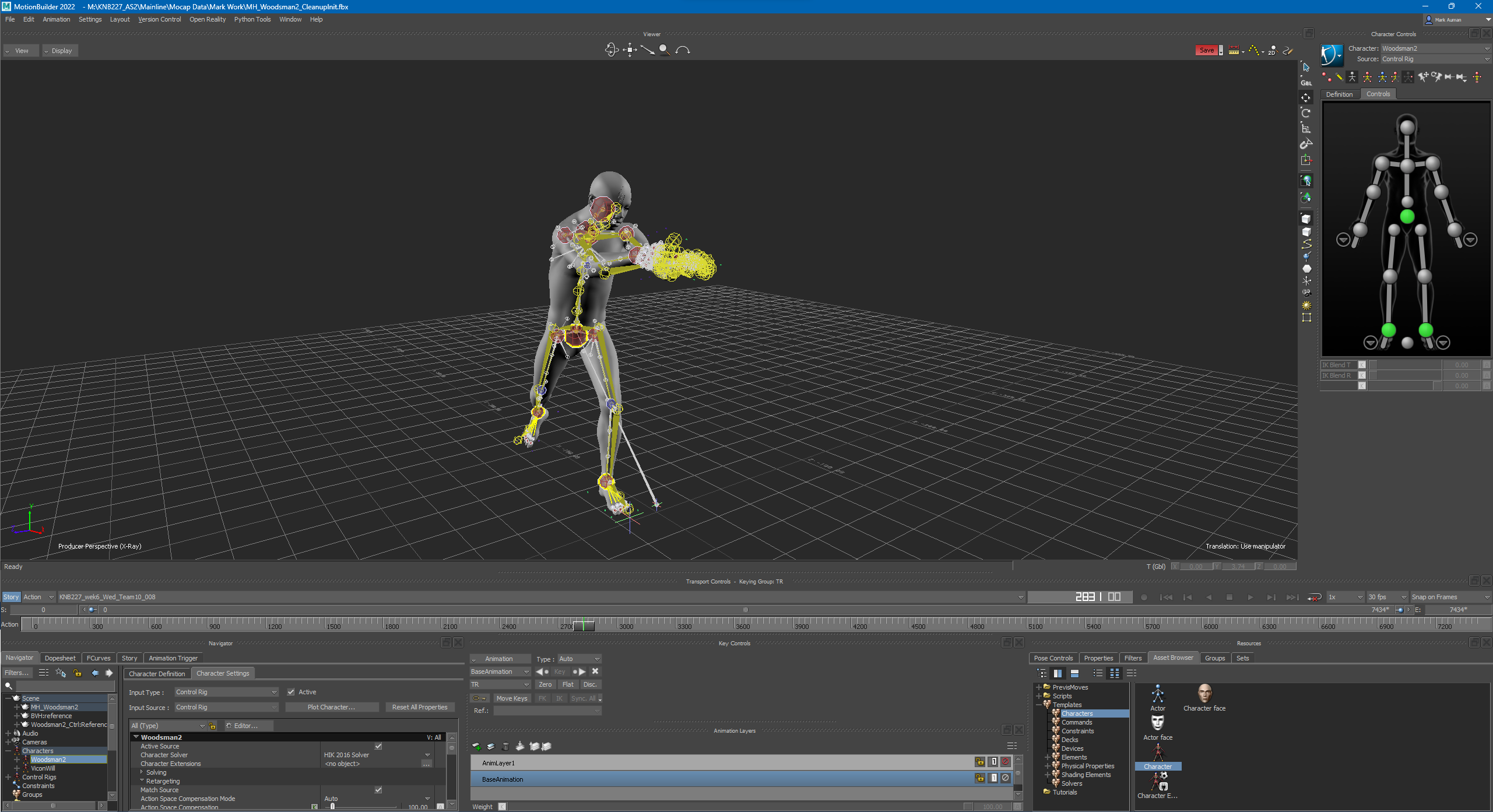

A central part of KNB227 was learning the motion capture pipeline - from recording through to a finished render. The pipeline ran through four stages: capture in a motion capture volume, processing in Shogun, clean-up in MotionBuilder, and final export into Unreal Engine 5.

Through the unit's studio sessions I learned how to clean up raw mocap data - correcting artefacts, addressing foot sliding, and tidying knee bends in MotionBuilder. I produced a proof-of-concept render applying cleaned motion capture data to The Woodsman, confirming the pipeline worked end-to-end before moving into the group phase.
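To illustrate what "foot sliding" means in practice, here is a minimal Python sketch. It is not the MotionBuilder workflow used on the project, where clean-up was done by hand. The function name, the thresholds, the Z-up convention, and the sample frames are all assumptions made for illustration. The idea is simply that a foot which stays near the floor between two frames should not move sideways, so any planted frame with noticeable horizontal drift gets flagged for correction.

```python
from math import hypot


def find_foot_slide_frames(positions, contact_height=2.0, max_drift=0.5):
    """Return indices of frames where a planted foot drifts horizontally.

    positions: list of (x, y, z) foot positions, one per frame, with z as
    height above the floor (an assumed convention for this sketch).
    contact_height and max_drift are hypothetical thresholds in scene units.
    """
    sliding = []
    for i in range(1, len(positions)):
        px, py, pz = positions[i - 1]
        cx, cy, cz = positions[i]
        # The foot counts as planted only if it is near the floor in both frames.
        planted = pz <= contact_height and cz <= contact_height
        # Horizontal distance travelled between the two frames.
        drift = hypot(cx - px, cy - py)
        if planted and drift > max_drift:
            sliding.append(i)
    return sliding


# Made-up sample data: five frames of a single foot.
frames = [
    (0.0, 0.0, 1.0),   # planted
    (0.1, 0.0, 1.0),   # planted, negligible drift
    (1.5, 0.0, 1.2),   # planted but moved 1.4 units: a slide
    (3.0, 0.0, 12.0),  # foot lifted, movement is expected
    (4.5, 0.0, 1.0),   # landing frame, previous frame was in the air
]

print(find_foot_slide_frames(frames))  # [2]
```

Flagged frames are only candidates. Deciding whether to pin the foot or adjust the surrounding keys is still a judgement call made while watching the performance on the character.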

Team & Project Management

In the group phase, five students combined their Metahumans into a shared Unreal project to produce the full music video. I took on the role of project manager - coordinating the team's workflow, tracking progress across segments, and keeping the project on schedule toward the submission deadline.

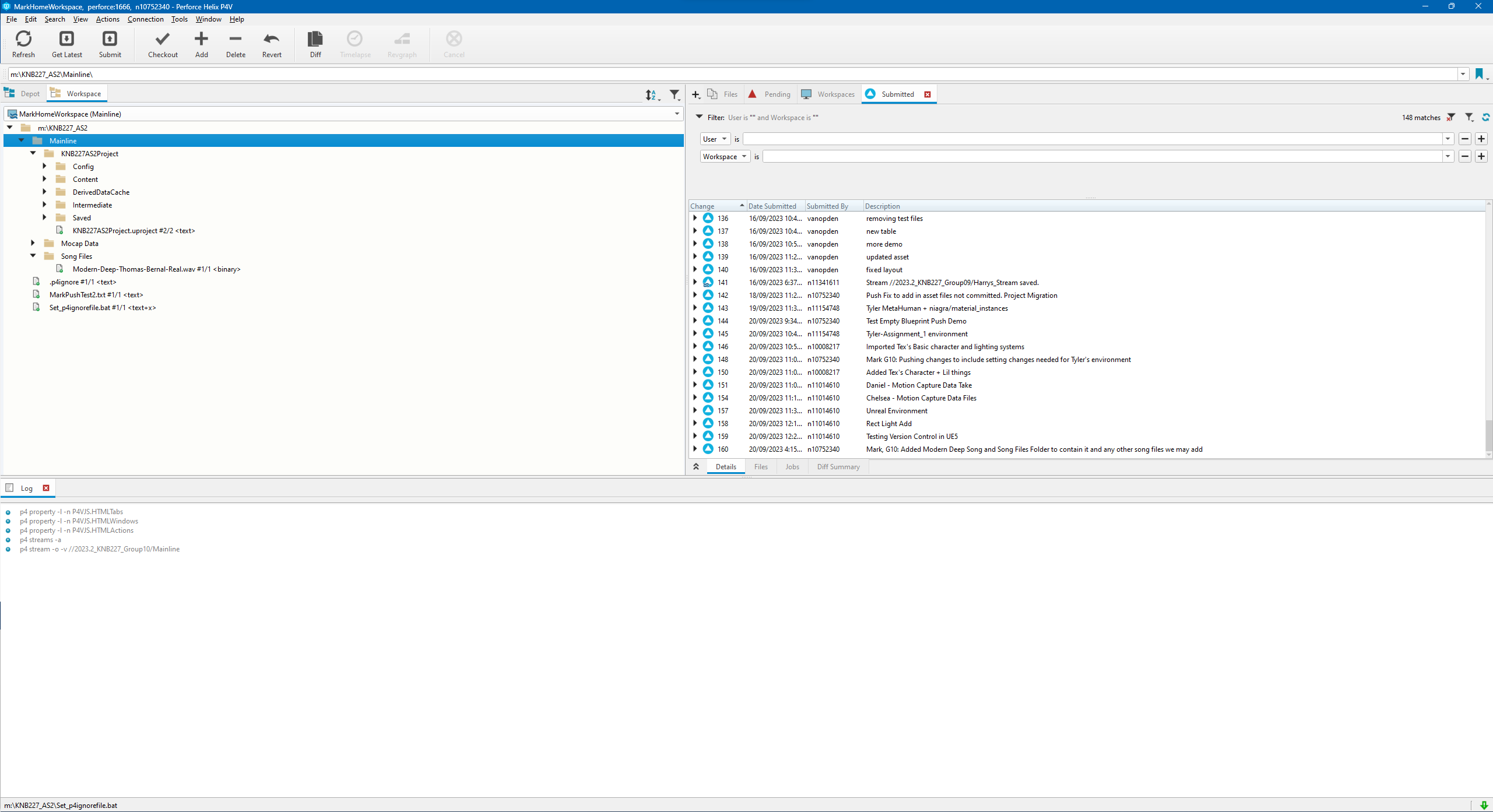

One of my first responsibilities was establishing version control for the team. I set up and managed a Perforce repository for the shared Unreal project. This was a new experience, as my previous projects had used Git and GitHub. Perforce operates quite differently, particularly in how it handles the large binary assets that version control systems like Git struggle with. Learning to manage a Perforce depot was a practical introduction to the tooling commonly used in larger game and film productions built with Unreal.

Further Metahuman, Motion Capture & Environment Work

Further into the group phase I cleaned bespoke motion capture performance data for The Woodsman's segment of the video - paying particular attention to foot placement and knee bend correction in MotionBuilder to ensure the performance read as natural on the character rig.

I customised the shared environment for The Woodsman's segment, adding trees and environmental elements drawn from my individual phase concept work to give the space a distinct character within the broader video. I also refined The Woodsman himself - adding particle effects and glowing eyes to strengthen his visual presence on screen.

With the performance data applied to The Woodsman and the segment rendered, I then edited all five team members' sequences together into the final music video, cutting the footage to the track and producing the finished submission.